KI DESIGN NATIONAL ELECTION SOCIAL MEDIA MONITORING PLAYBOOK — PART IV of V

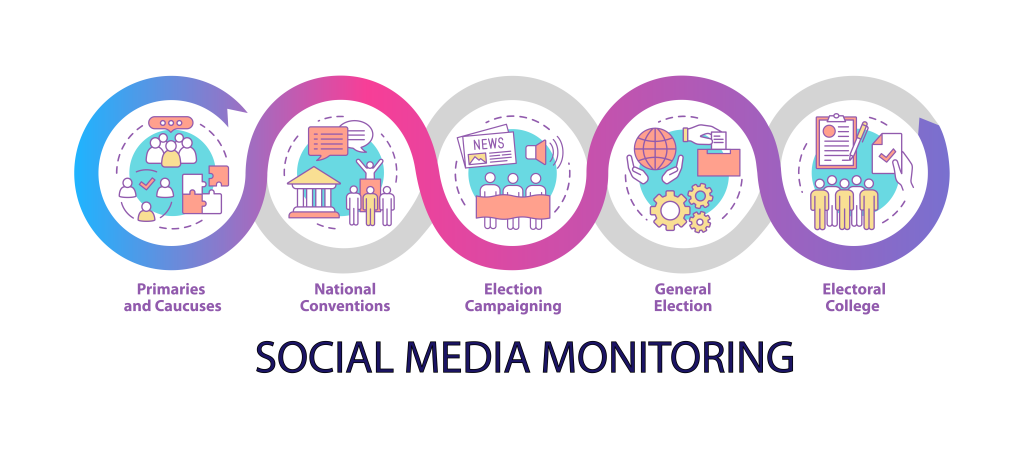

Monitoring Online Discourse During An Election: A 5-Part Series. How to monitor social media with AI-based tools during an election campaign. Traditional election monitoring is a formalized process in democratic countries, set out in the mandate of the national Electoral Management Body (EMB). As social and digital cultures change, however, EMBs are finding it useful…

Wael Hassan

MANAGING OPERATIONAL ISSUES DURING AN ELECTION — PART III of V

Monitoring Online Discourse During An Election: A 5-Part Series. The advantages of managing election logistical issues through social media. Organizing the logistics of an election is a complex process. It’s a question of scale: the sheer numbers involved – of voters, of polling options and locations, and of election materials – mean that things can,…

Wael Hassan

IDENTIFYING DISINFORMATION — PART II of V

Monitoring Online Discourse During An Election: A 5-Part Series. Using AI to track disinformation during an election campaign. How can online disinformation be identified and tracked? KI Design provided social media monitoring solutions for the 2019 Canadian federal election.[1] KI Social is a suite of tools designed to support three main areas of Electoral Management…

Wael Hassan