Data for Democracy Project

Monitoring Elections: The Data for Democracy Project aims to identify and recommend strategies, tools, and technology to protect democratic processes and systems from social media misinformation and disinformation. By creating a technology solution that permits observers to monitor election interference, we offer a unique and bipartisan approach to election monitoring. Built by experts in technology,…

5 Questions About Elections and Disinformation

With a US election campaign ramping up, many people around the world are paying attention to the impact of misinformation and disinformation on democracy. Electoral management bodies, including election officials at all levels of government, are looking to social media monitoring to battle election interference through disinformation campaigns. Three types of attacks are commonly seen…

MONITORING POLITICAL FINANCING ISSUES ON SOCIAL MEDIA — PART V

Monitoring Online Discourse During An Election: A 5-Part Series. How social media monitoring can help Electoral Management Bodies ascertain, measure, and validate political spending. How politicians and their supporters invest in political messaging is rapidly changing. For the last few years, the amount of money spent on political advertising on the Internet has been…

Monitoring Online Discourse During An Election: A 5-Part Series

PART I: INTRODUCTION. Online interference with elections is a reality of 21st-century politics. Social media disinformation campaigns have targeted citizens of democracies across the globe and impacted public perceptions and poll results, often dramatically. Disinformation: False information spread with the intent to deceive. Misinformation: Inaccurate information spread without an intent to deceive. Political campaigns,…

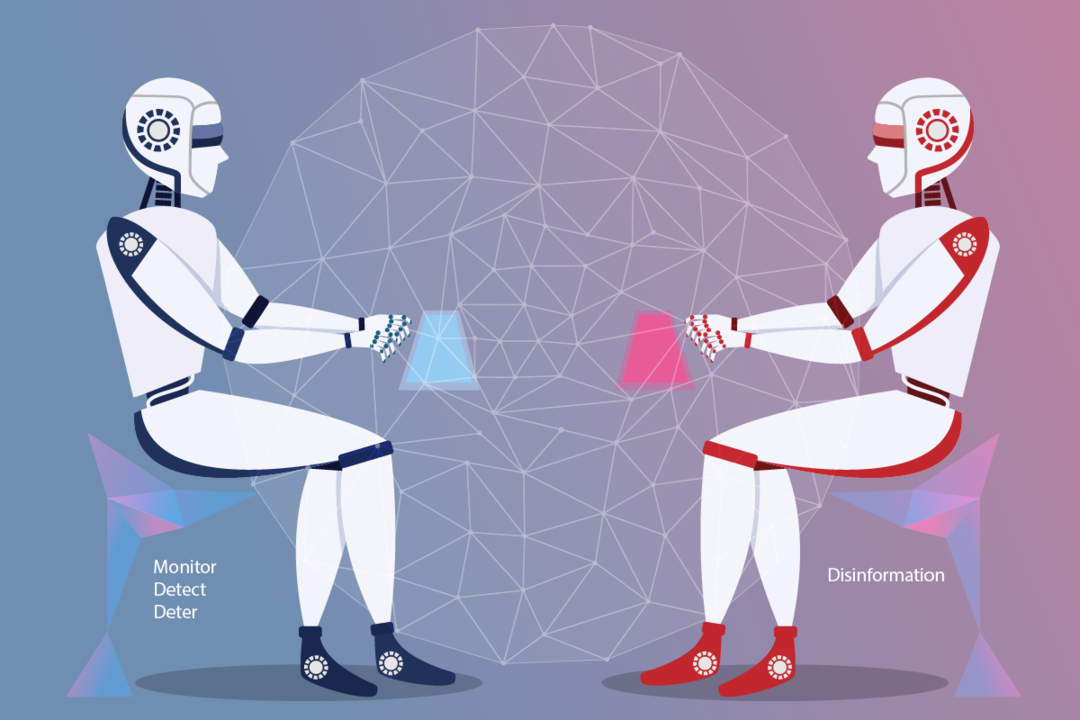

Using AI to Combat AI-Generated Disinformation

AI can impact election outcomes. How can this be combatted?